The AI security paradox: what nobody warns you about multi-component architectures

Let me tell you what keeps me up at night

"Alla pratar om AI-möjligheter. Nästan ingen pratar om säkerhetskonsekvenserna." (Everyone talks about AI opportunities. Almost no one talks about the security consequences.)

I've watched organization after organization rush to deploy AI, connect multiple services, send data everywhere, and then act shocked - SHOCKED - when security problems emerge.

Here's the uncomfortable truth: The most effective AI implementations require the most complex security architectures. And most organizations aren't remotely prepared for this.

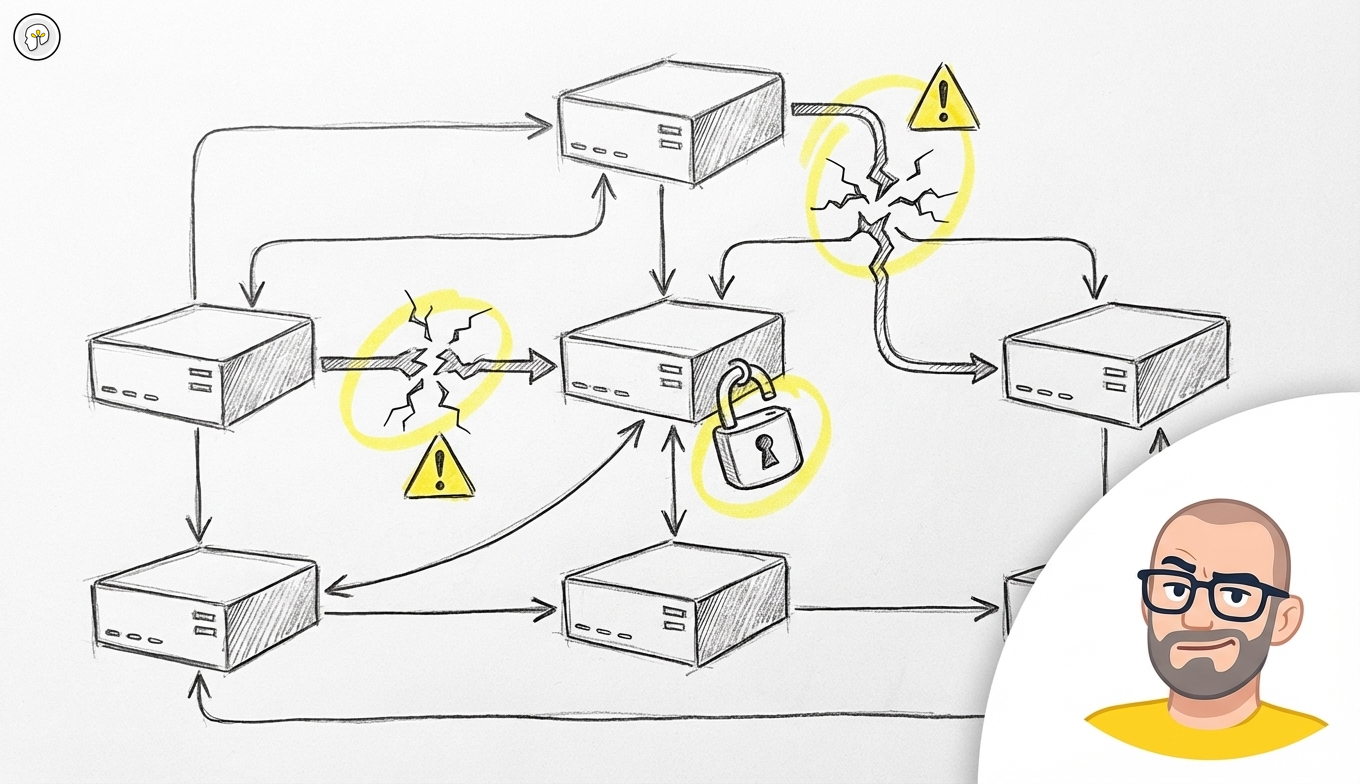

The paradox is real: building robust AI systems requires distributing trust across multiple vendors and platforms. Each connection is a potential security hole. Each vendor is a potential liability.

"En enskild modell räcker sällan. Det ger bättre effekt att koppla samman olika AI-lösningar för text, siffror och realtidsdata" (A single model is rarely sufficient. Connecting different AI solutions for text, numbers and real-time data is more effective.)

This architectural reality creates security challenges that go far beyond traditional software system protection. Organizations must now manage data security across multiple AI services, each with different privacy policies, storage locations, and compliance requirements.

What AI security cannot guarantee

Before we go further, let me be clear about what no amount of architecture can fix:

- AI cannot keep secrets - If you send sensitive data to an AI service, it's been sent. Period.

- Vendors cannot un-train - Data already used for training cannot practically be removed from a trained model

- Compliance cannot be fully automated - GDPR, AI Act, and sector regulations require human judgment

- Zero trust is still trust - Every architecture assumes SOME level of trust somewhere

- Security costs money - There's no free lunch. Secure AI costs more.

"Säkerhet är inte en produkt. Det är en process - och den tar aldrig slut." (Security is not a product. It's a process - and it never ends.)

The multi-component necessity

A single model rarely delivers production-quality results, because different AI technologies excel at different tasks. Language models handle text understanding and generation well but struggle with numerical analysis and real-time data processing. Specialized analytics models excel at statistical processing but cannot generate human-readable explanations. Real-time models provide current information but lack deep reasoning capabilities.

"Skillnaden mellan språkmodeller, generiskt innehåll och dataanalys" (the difference between language models, generic content and data analysis) reflects the fundamental reality that "en språkmodell i grunden är tränad för att tolka och producera språk – det vill säga text, konversationer och sammanhang" (a language model is fundamentally trained to interpret and produce language - that is, text, conversations and context).

This specialization forces organizations to build systems that combine multiple AI services, each introducing its own security considerations. A typical production AI system might use one service for text processing, another for numerical analysis, and a third for real-time data updates. Each service potentially stores and processes sensitive business data in different locations under different security frameworks.

The geopolitical security reality

AI security decisions have become geopolitical issues that affect business operations in ways that traditional software security never did. Organizations find themselves evaluating AI vendors not just on technical capabilities but on national origin and data sovereignty implications.

"När asiatiska, och särskilt kinesiska, aktörer kommer på tal uppstår ofta en större tveksamhet, trots att privacy- och säkerhetsfrågorna i själva verket borde bedömas utifrån faktiska avtal och teknisk implementering, snarare än enbart ägarnas nationalitet" (When Asian, and especially Chinese, actors come up there is often greater hesitation, despite the fact that privacy and security issues should actually be assessed based on actual agreements and technical implementation, rather than just the owners' nationality)

This geopolitical dimension creates operational complexities for multinational organizations. Different regions have different restrictions on AI vendor usage. European organizations face GDPR compliance challenges when using AI services that store data outside the EU. American organizations must navigate restrictions on certain foreign AI providers. Asian organizations may face limitations on Western AI services.

The security evaluation process becomes more complex because organizations must assess not just technical security measures but also political stability, regulatory compliance across multiple jurisdictions, and potential future restrictions on vendor relationships.

The European compliance challenge

European organizations face particularly complex AI security challenges due to stringent data protection requirements that weren't designed with AI systems in mind.

"Juridiska risker med att använda AI-plattformar som lagrar data utanför EU" (Legal risks with using AI platforms that store data outside the EU)

GDPR compliance gets harder with every component added to a multi-component AI architecture. Each AI service potentially processes personal data, requiring separate data processing agreements, privacy impact assessments, and compliance monitoring. Organizations must track how data flows between AI components, where it's stored at each stage, and how long it's retained by each service.
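Tracking where data is stored and how long each service retains it can start as a simple register. Here is a minimal sketch, assuming a hypothetical `DataFlow` record per AI service and a hypothetical 30-day retention limit. The service names and field names are illustrative, not taken from any vendor. It only flags items for a human reviewer; it does not decide compliance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataFlow:
    """One hop of personal data into an AI service (all fields illustrative)."""
    service: str
    storage_region: str      # e.g. "EU" or "US"
    retention_days: int
    has_dpa: bool            # data processing agreement signed
    used_for_training: bool  # vendor may use the data for model improvement


def compliance_gaps(flows, max_retention_days=30):
    """Return (service, issue) pairs that a human reviewer should follow up."""
    gaps = []
    for f in flows:
        if not f.has_dpa:
            gaps.append((f.service, "no DPA"))
        if f.storage_region != "EU":
            gaps.append((f.service, f"stored in {f.storage_region}"))
        if f.retention_days > max_retention_days:
            gaps.append((f.service, f"retention {f.retention_days}d"))
        if f.used_for_training:
            gaps.append((f.service, "used for model training"))
    return gaps


# Hypothetical register with two services.
flows = [
    DataFlow("text-service", "EU", 30, True, False),
    DataFlow("analytics-service", "US", 90, False, False),
]

for service, issue in compliance_gaps(flows):
    print(f"{service}: {issue}")
```

The point of keeping this as data rather than a document is that the register can be checked on every change, while the judgment call on each flagged item stays with legal.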

The compliance challenge is compounded by the fact that AI services often use data for model improvement, which may not align with GDPR's purpose limitation principle. Organizations must negotiate specific clauses with each AI vendor to prevent unauthorized data usage while maintaining the functionality that makes multi-component AI systems effective.

The data sovereignty imperative

As AI systems become more critical to business operations, data sovereignty becomes a strategic concern rather than just a compliance issue.

"Var data hamnar och hur den lagras är minst lika viktigt som modellens prestanda" (Where data ends up and how it's stored is at least as important as the model's performance)

Organizations must balance AI capability with control over their data. This means evaluating not just where data is stored but how it's processed, who has access to it, and what happens to it after processing completes. Multi-component AI architectures complicate this evaluation because data may flow through multiple services in different jurisdictions.

The sovereignty imperative requires organizations to develop data classification systems that determine which types of information can be processed by which AI services. Highly sensitive data might be restricted to on-premises or private cloud AI services, while less sensitive data might go to public AI APIs for better performance and cost efficiency.
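A classification system like this can be sketched in a few lines. The sensitivity levels, the field-to-label mapping, and the target names (`public_api`, `private_cloud`, `on_premises`) below are all assumptions for illustration; your own classification scheme will differ. The one design choice worth copying is that unknown fields fail closed.

```python
from enum import IntEnum


class Sensitivity(IntEnum):
    PUBLIC = 0
    INTERNAL = 1
    PERSONAL = 2
    SPECIAL_CATEGORY = 3  # e.g. health data


# Hypothetical mapping from field names to sensitivity labels.
FIELD_LABELS = {
    "product_name": Sensitivity.PUBLIC,
    "revenue": Sensitivity.INTERNAL,
    "email": Sensitivity.PERSONAL,
    "diagnosis": Sensitivity.SPECIAL_CATEGORY,
}

# Hypothetical policy: which deployment types may process each level.
ALLOWED_TARGETS = {
    Sensitivity.PUBLIC: {"public_api", "private_cloud", "on_premises"},
    Sensitivity.INTERNAL: {"public_api", "private_cloud", "on_premises"},
    Sensitivity.PERSONAL: {"private_cloud", "on_premises"},
    Sensitivity.SPECIAL_CATEGORY: {"on_premises"},
}


def classify(record: dict) -> Sensitivity:
    """A record is as sensitive as its most sensitive field.

    Unknown fields are treated as PERSONAL so that new data fails closed.
    """
    return max(
        (FIELD_LABELS.get(key, Sensitivity.PERSONAL) for key in record),
        default=Sensitivity.PUBLIC,
    )


def may_send(record: dict, target: str) -> bool:
    """True if the record's classification permits the given deployment type."""
    return target in ALLOWED_TARGETS[classify(record)]


record = {"product_name": "Widget", "email": "anna@example.se"}
print(classify(record).name, may_send(record, "public_api"))
```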

The security assessment evolution

Traditional software security assessments focus on code review, penetration testing, and infrastructure security. AI security requires additional evaluation criteria that many organizations aren't prepared to handle.

"Granska leverantörers policy och ägarstruktur" (Review suppliers' policies and ownership structure)

AI security assessment must include evaluation of vendor data usage policies and commitments not to use customer data for model training. This requires understanding how AI vendors separate customer data from training data and what technical measures prevent unauthorized access.

Organizations must also assess vendor stability and longevity because AI service dependencies are harder to replace than traditional software dependencies. Switching AI vendors often requires retraining users, updating prompts and workflows, and potentially rebuilding integrations. This lock-in effect makes vendor security and reliability assessment more critical than for traditional software purchases.

The architecture security framework

Successful AI security requires architectural approaches that assume multiple trust boundaries rather than relying on perimeter security models.

"Säkerställ dataflöden mellan komponenter" (Secure the data flows between components)

The framework must include data classification and routing rules that determine which types of information can be processed by which AI services. This requires technical implementation of data filtering, encryption in transit between AI services, monitoring and logging of all AI service interactions, and automated compliance checking for data handling policies.
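Two of these controls, data filtering and interaction logging, are easy to sketch. The example below assumes two regular expressions (email addresses and the written format of Swedish personal identity numbers) and a hypothetical `audit_entry` helper. Real filtering needs far more patterns than this, and encryption in transit is normally handled by the HTTPS client rather than by application code.

```python
import hashlib
import json
import re
from datetime import datetime, timezone

# Illustrative patterns only; they check format, not validity.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PERSONNUMMER = re.compile(r"\b(?:\d{2})?\d{6}[-+]\d{4}\b")


def redact(text: str) -> str:
    """Replace obvious personal identifiers before text leaves the organization."""
    text = EMAIL.sub("[EMAIL]", text)
    return PERSONNUMMER.sub("[ID]", text)


def audit_entry(service: str, payload: str) -> str:
    """Build a JSON log line that records a hash of the payload, not the payload."""
    return json.dumps({
        "time": datetime.now(timezone.utc).isoformat(),
        "service": service,
        "sha256": hashlib.sha256(payload.encode("utf-8")).hexdigest(),
        "chars": len(payload),
    })


prompt = "Contact anna@example.se, id 850709-9805, about the invoice."
safe = redact(prompt)
print(safe)
print(audit_entry("text-service", safe))
```

Logging a hash instead of the text keeps the audit trail from becoming a second copy of the sensitive data, while still letting you prove later which payload went to which service.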

The architecture must also include fallback mechanisms that maintain security when AI services fail or become unavailable. This might involve local processing capabilities for sensitive operations or alternative AI services that meet higher security standards even if they provide lower performance.
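The important property of a fallback is that it never trades security for availability. A minimal sketch, assuming a hypothetical numeric `security_tier` per service (higher means stricter controls) and stand-in functions in place of real service clients:

```python
from typing import Callable, NamedTuple


class Service(NamedTuple):
    name: str
    security_tier: int          # illustrative scale: higher = stricter controls
    call: Callable[[str], str]  # stand-in for a real client call


class NoCompliantService(Exception):
    """Raised when no service at the required tier is available."""


def call_with_fallback(prompt: str, services: list[Service],
                       required_tier: int) -> tuple[str, str]:
    """Try eligible services in order; never fall back below required_tier."""
    eligible = [s for s in services if s.security_tier >= required_tier]
    for service in eligible:
        try:
            return service.name, service.call(prompt)
        except Exception:  # real code should catch the client's specific errors
            continue
    raise NoCompliantService(f"no available service at tier >= {required_tier}")


# Stand-ins for real services (hypothetical behavior).
def flaky_cloud(prompt: str) -> str:
    raise TimeoutError("service unavailable")


def public_api(prompt: str) -> str:
    return "public answer"


def local_model(prompt: str) -> str:
    return f"local summary of {len(prompt)} chars"


services = [
    Service("private-cloud", 2, flaky_cloud),
    Service("public-api", 1, public_api),
    Service("on-prem", 3, local_model),
]

print(call_with_fallback("quarterly figures", services, required_tier=2))
```

Here the private cloud service fails, the public API is skipped because its tier is too low, and the request lands on the slower on-premises model. If nothing eligible is available, the call fails loudly instead of quietly downgrading.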

The cost-security trade-off

Multi-component AI architectures force organizations to make explicit trade-offs between capability, cost, and security that aren't obvious from simple AI demonstrations.

Higher security typically means higher costs through private cloud deployments, dedicated instances, or on-premises AI solutions. It may also mean lower performance through restricted AI services that meet security requirements but offer less capability than public alternatives.

Organizations must develop frameworks for making these trade-offs systematically rather than defaulting to either maximum security or maximum capability. This requires understanding the business value of different types of AI capabilities and the actual risks associated with different security approaches.

The integration security patterns

Successful AI security implementation follows a few recurring patterns that organizations can adapt to their own requirements and risk tolerance.

The zero-trust pattern treats each AI service as potentially compromised and implements validation at every integration point. This includes encrypting data between AI services, validating AI outputs before using them in business logic, monitoring all AI service interactions for anomalies, and implementing automated incident response for security violations.
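Validating AI outputs before they reach business logic is the part of this pattern teams most often skip. A minimal sketch, assuming the model was asked to reply with a JSON object containing an `action` and a `confidence`; both the schema and the allowed actions are hypothetical:

```python
import json

# Hypothetical set of actions the business logic is prepared to handle.
ALLOWED_ACTIONS = {"approve", "reject", "escalate"}


class InvalidModelOutput(ValueError):
    """Raised when a model response does not match the expected shape."""


def parse_decision(raw: str) -> dict:
    """Treat the model response as untrusted input and validate every field."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise InvalidModelOutput("not valid JSON") from exc
    if not isinstance(data, dict):
        raise InvalidModelOutput("expected a JSON object")

    action = data.get("action")
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        raise InvalidModelOutput(f"unexpected action: {action!r}")

    confidence = data.get("confidence")
    is_number = isinstance(confidence, (int, float)) and not isinstance(confidence, bool)
    if not is_number or not 0 <= confidence <= 1:
        raise InvalidModelOutput("confidence must be a number in [0, 1]")

    # Return only the validated fields; anything else the model sent is dropped.
    return {"action": action, "confidence": float(confidence)}


print(parse_decision('{"action": "approve", "confidence": 0.9, "note": "ignored"}'))
```

Anything that fails validation should go to the incident or review path, never straight into a business decision.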

The layered security pattern implements different security levels for different types of data and operations. Highly sensitive operations use on-premises or private AI services with maximum security controls. Moderately sensitive operations use public AI services with enhanced monitoring and data handling agreements. Low-sensitivity operations use standard public AI services for maximum performance and cost efficiency.

The monitoring and compliance reality

AI security requires continuous monitoring that goes beyond traditional security metrics to include AI-specific indicators of compromise or compliance violations.

This includes monitoring for data leakage through AI service interactions, tracking compliance with data handling agreements across multiple vendors, detecting unusual patterns in AI service usage that might indicate security incidents, and measuring AI service reliability and availability for business continuity planning.
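Detecting unusual usage patterns does not have to start with a dedicated product. As a rough sketch, assuming you already collect daily request counts per AI service, a rolling comparison against the previous week catches the crudest spikes. The window, threshold, and sample numbers are illustrative assumptions.

```python
from statistics import mean, stdev


def unusual_days(daily_requests: list[int], threshold: float = 3.0,
                 window: int = 7) -> list[int]:
    """Return indices of days whose volume deviates from the preceding
    `window` days by more than `threshold` standard deviations."""
    flagged = []
    for i in range(window, len(daily_requests)):
        history = daily_requests[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma == 0:
            # Perfectly flat history: any change at all is worth a look.
            if daily_requests[i] != mu:
                flagged.append(i)
            continue
        if abs(daily_requests[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged


# Hypothetical request counts for one AI service; day 8 is a spike.
volumes = [100, 104, 98, 101, 97, 103, 99, 102, 480, 100]
print(unusual_days(volumes))
```

A spike inflates the baseline for the following week, so a check this simple can miss a second incident right after the first. Treat it as a starting point for alerts, not as the monitoring framework itself.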

The monitoring framework must also include regular auditing of AI vendor compliance with security agreements and assessment of changing geopolitical restrictions that might affect AI service availability or compliance requirements.

Conclusion: security as architectural foundation

AI security cannot be an afterthought in multi-component architectures - it must be a foundational design principle that influences every architectural decision.

"Många av framtidens vinnare är de som lär sig kombinera olika AI- och ML-komponenter för att bygga flexibla och kraftfulla arbetsflöden" (Many of the future winners are those who learn to combine different AI and ML components to build flexible and powerful workflows)

The winners will be organizations that master the complexity of secure multi-component AI architectures rather than those that avoid AI due to security concerns or those that ignore security for the sake of AI capability.

The key insight is that AI security requires the same systematic thinking and architectural discipline that organizations apply to other critical business systems. Security becomes more complex with AI, but it's manageable with a proper framework and systematic implementation.

Vendor security comparison matrix

When evaluating AI vendors for multi-component architectures, organizations must assess multiple dimensions beyond technical capability:

| Provider | Training data policy | EU hosting | GDPR-ready DPA | SOC 2 | Ownership |
|---|---|---|---|---|---|
| OpenAI | Opt-out (consumer), No (enterprise/API) | Via Azure only | Yes (enterprise) | Yes | US (Microsoft stake) |
| Anthropic (Claude) | Never trains on user data | No (US only) | Yes | Yes | US |
| Google (Gemini) | Opt-out (consumer), No (Workspace/Vertex) | Yes (Vertex AI) | Yes | Yes | US |
| Microsoft (Azure OpenAI) | No | Yes | Yes | Yes | US |
| Mistral | No (API) | Yes (EU-native) | Yes | Pending | France/EU |

Vendor selection by use case

Maximum EU compliance:

1. Microsoft Azure OpenAI (EU region)
2. Google Vertex AI (EU region)
3. Mistral (EU-native)

Best privacy policies:

1. Anthropic Claude (never trains)
2. Azure OpenAI (enterprise controls)
3. Google Vertex AI (enterprise controls)

Swedish organization recommendations:

- Start with Microsoft if already using M365/Azure
- Consider Google if using Workspace
- Use Claude for highest-sensitivity analysis (accept US hosting)
- Evaluate Mistral for EU-sovereign requirements

Swedish and Nordic-specific considerations

Regulatory landscape

Swedish organizations face a specific regulatory context:

Offentlighetsprincipen (Public access principle):

- Public sector organizations must consider if AI interactions become public records
- Document what data is sent to AI services
- Implement logging for transparency requirements

Arbetsmiljölagen (Work Environment Act):

- AI implementation affects work conditions
- Involve unions/safety representatives in AI deployment decisions
- Document AI's role in decision-making processes

Branschspecifika krav (Industry-specific requirements):

- Banking (Finansinspektionen): Additional requirements for AI in financial decisions
- Healthcare (IVO): Patient data cannot use standard AI services
- Defense (FMV): Restricted AI vendor list

Data residency options for Swedish organizations

| Requirement level | Recommended approach | Trade-offs |
|---|---|---|
| Standard business data | Azure OpenAI (Sweden/EU) or Google Vertex (EU) | Full capability, standard compliance |
| Personal data (GDPR) | Azure OpenAI with DPA, EU region only | Some latency, higher cost |
| Sensitive categories (Art. 9) | On-premises solutions or no AI | Significant capability loss |
| Classified (Sekretess) | Swedish sovereign solutions only | Very limited AI options |

Practical recommendations for Swedish organizations

- Classify data first - Before adopting AI, decide which data types may be processed where

- Establish a DPA with every vendor - Standard terms are not enough for GDPR

- Document data flows - Where does the data go? Who has access? How long is it retained?

- Involve legal early - AI security is a legal issue, not just a technical one

- Plan for change - Regulatory requirements for AI will tighten (AI Act 2025+)

Implementation checklist

Phase 1: Assessment

- [ ] Classify data types by sensitivity

- [ ] Map current and planned AI service usage

- [ ] Identify regulatory requirements (industry, geography)

- [ ] Document existing data flows and storage locations

Phase 2: Architecture design

- [ ] Define security tiers for different data types

- [ ] Select vendors meeting each tier's requirements

- [ ] Design data routing between components

- [ ] Plan fallback mechanisms for security failures

Phase 3: Implementation

- [ ] Negotiate DPAs with all AI vendors

- [ ] Implement technical controls (encryption, access control)

- [ ] Deploy monitoring and logging

- [ ] Train staff on security procedures

Phase 4: Operations

- [ ] Regular vendor compliance audits

- [ ] Continuous monitoring for anomalies

- [ ] Incident response procedures

- [ ] Periodic architecture review

Your responsibility

"Du är ansvarig för varje dataläcka, oavsett vad leverantören lovade." (You are responsible for every data leak, regardless of what the vendor promised.)

Let me be direct: vendors will not protect you. Terms of service are designed to limit THEIR liability, not protect YOU.

What you must accept:

- Security is your problem, not the vendor's
- "Enterprise-grade" is marketing, not a guarantee
- Compliance checkboxes don't mean actual security
- The cheapest option is never the most secure

What you must do:

- Classify your data BEFORE choosing AI tools
- Get legal sign-off for every vendor relationship
- Monitor actual data flows, not just policies
- Plan for breaches, not just prevention
- Budget for security - it costs 20-50% more than insecure alternatives

Conclusion: security is not optional

Organizations that ignore AI security don't get to complain when breaches happen. Organizations that obsess over security without deploying don't get the benefits. The winners find the balance.

"Många av framtidens vinnare är de som lär sig kombinera olika AI- och ML-komponenter för att bygga flexibla och kraftfulla arbetsflöden" (Many of the future winners are those who learn to combine different AI and ML components to build flexible and powerful workflows)

But those winners will be the ones who did the security work FIRST, not as an afterthought.

The key insight: AI security requires MORE systematic thinking than traditional software security, not less. If you're not prepared for that, you're not ready for AI in production.

AI is a tool. Security is your responsibility. Verktyg, inte magi. (A tool, not magic.)

See also: AI Training Data Policies for detailed vendor data handling comparison.

Based on 30 years of production development and watching security failures unfold in real organizations.

Published: December 2025